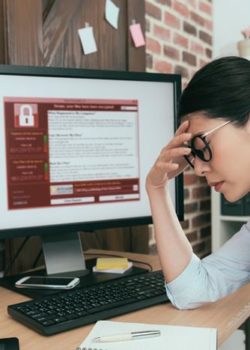

A startling 87% of UK organisations are prone to cyber-attack, according to new research from Microsoft and academics at Goldsmiths, University of London.

The news comes at a time when there is increasing worry about the use of artificial intelligence by criminals.

Only 13% of organisations, across both the public and private sectors, are “resilient” to attack. The largest share, 48%, are deemed “vulnerable”, while 39% are classified as “at high risk”.

The report says AI-powered attacks are capable of threatening supply chains, disabling essential systems and leaking sensitive data.

Paul Kelly, director of the security business group at Microsoft, says some criminals are “tooling up” with AI to “increase the sophistication” of their attacks.

“The same AI technologies can help leaders better secure their organisation and tip the balance back in their favour,” Kelly says.

According to the report, Mission Critical, organisations categorised as “high risk” are those where leadership is “not regularly informed” about cyber-threats, leading to a “lack of leadership engagement when attacked”.

They are unlikely to have a business continuity plan and less likely to regularly assess and monitor “vulnerabilities” in their operations.

Vulnerable organisations are those in which cybersecurity is considered a priority by only a small number of people. They assess vulnerabilities, but lack “proactive detection and prevention”.

The role of governance

The report makes a number of recommendations for boosting “resilience”, with governance a key component.

“Policymakers should continue to collaborate with businesses of all sizes and across all sectors—from healthcare to manufacturing, and from military to finance—helping them operationalise simple outcomes-based guidance that encourages the safe deployment of AI in cybersecurity.”

In an article for Board Agenda earlier this year, cybersecurity expert Federico Charosky wrote that boards should be asking questions about the maturity of cybersecurity in their organisations, the adequacy of budgets, measures of attack and defence, and whether investments already made were being used “wisely and to the maximum extent”.

It is a year since Europol, the European agency for police cooperation, said “malicious use cases” of tools such as ChatGPT were already possible, making headlines around the world. Businesses may want to accelerate their preparations.